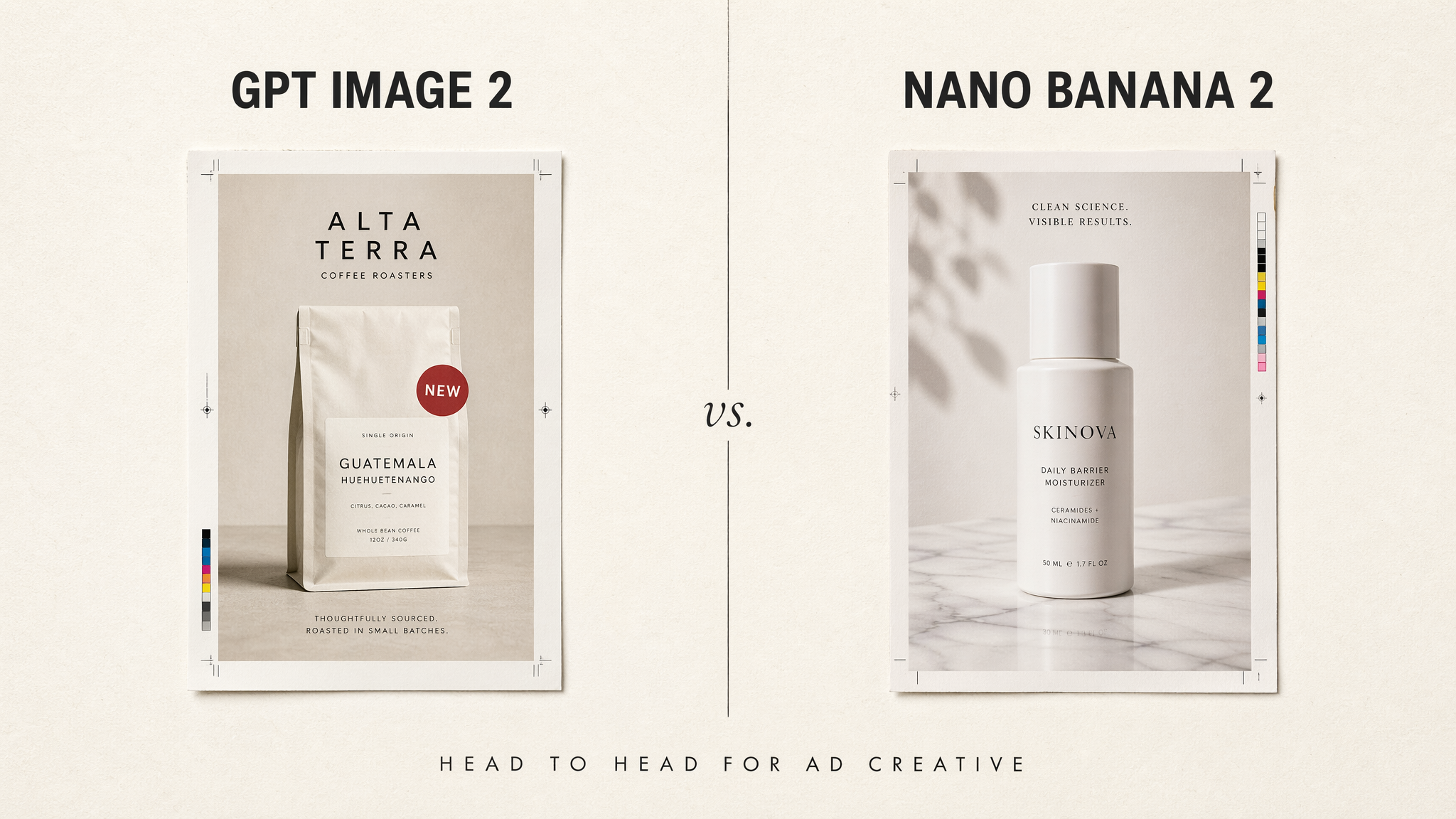

OpenAI shipped GPT Image 2 on April 21, 2026 — just a few days before this post went out — and it immediately re-opened a question every performance marketer thought they had answered: do I switch from Nano Banana 2, or do I keep what I have? The two models are genuinely different tools — built by different labs, trained with different priorities, exposed through different APIs — and the "best" one for your next campaign depends almost entirely on which bottleneck is hurting your creative pipeline today.

This guide is the honest comparison I wish existed when GPT Image 2 dropped. We ran the same seven briefs through both models' official APIs (OpenAI v1/images/generations for GPT Image 2; Google's gemini-3.1-flash-image-preview for Nano Banana 2) and looked at the things that actually matter for paid ads: in-image typography, brand and product fidelity, character consistency across a campaign, iteration speed, aspect ratio coverage, directability, and cost. Every image below is a raw model output — no retouching. At the end, I share the hybrid workflow our team now uses in production.

TL;DR — the one-sentence verdict

> GPT Image 2 is the typography, reasoning, and scene-direction model. Nano Banana 2 is the character-consistency, multi-aspect-ratio, and fast-iteration model. Performance teams shipping 100+ variants a month should use both — and let creative strategy, not model loyalty, decide the split.

Why the model choice matters (more than most teams admit)

Meta's own research puts creative quality at up to 56% of auction outcomes — larger than targeting, bidding, and placement combined. Creative is the lever. And at scale, the image model is the factory. Pick the wrong one for the job and you will feel it in three places:

- Rejection rate. Text that renders wrong, products that "almost" match the brand, logos that drift — these fail QA before they fail auctions.

- Iteration cost. A model that cannot edit its own output forces you to regenerate from scratch every round. At 100 variants × 3 revisions, that math bleeds hours.

- Creative fatigue. TikTok creative burns out in 7 days. Meta top performers lose 20–30% effectiveness in two weeks. The only defense is structured volume — and volume is gated by the model's throughput and directability.

Once you frame the decision this way, "which model is better" becomes "which model is better at the specific thing blocking my pipeline." Let's look at those things one by one.

The two models, side by side

| Dimension | GPT Image 2 (OpenAI) | Nano Banana 2 (Google) |

|---|---|---|

| Released | April 21, 2026 — successor to gpt-image-1.5 | February 26, 2026 — successor to Nano Banana Pro (Gemini 3 Pro Image, Nov 2025) |

| Model ID | gpt-image-2 | gemini-3.1-flash-image-preview |

| Core strength | Typography, native reasoning, instruction following | Character consistency, multi-aspect-ratio coverage, conversational editing |

| Native sizes | 1024×1024, 1024×1536, 1536×1024 (low / medium / high quality) | 0.5K (512px), 1K (default), 2K, 4K |

| Aspect ratios | 1:1, 3:2, 2:3 | 1:1, 2:3, 3:2, 3:4, 4:3, 4:5, 5:4, 9:16, 16:9, 21:9, 1:4, 4:1, 1:8, 8:1 (14 total) |

| Reference images | Supported via v1/images/edits (image + optional mask) | Supported natively in the generation call; up to 5 consistent characters + 14 objects |

| Editing | Single-turn edit endpoint with image and optional mask | Multi-turn conversational editing in the same session |

| Reasoning / "thinking" | Yes — Thinking mode adds web search, multi-image batching (up to 8), and output self-verification | No equivalent reasoning mode; bias toward speed |

| Watermark | C2PA content credentials | SynthID watermark + C2PA content credentials |

| API style | Sync REST v1/images/generations and v1/images/edits | Sync generateContent; multi-turn conversation supported |

| OpenAI's own profile | "Performance: Highest; Speed: Medium" | Positioned as the "Flash" speed tier of Gemini 3.1 image generation |

Sources: OpenAI's gpt-image-2 model page, OpenAI's ChatGPT Images 2.0 launch post, Google's Nano Banana 2 announcement, Google's gemini-3.1-flash-image-preview model page.

A few of these rows deserve more than a cell.

Round 1 — In-image text and typography

Winner: GPT Image 2, decisively.

GPT Image 2 — Same "ORIGIN COLLECTION / Ethiopia Yirgacheffe / SHOP NOW" brief.

Nano Banana 2 — Same brief, same aspect, same resolution.

This is where GPT Image 2 inherits OpenAI's clearest advantage. The gpt-image-1 family already set the bar for legible, kerned, correctly spelled text inside an image — and TechCrunch's launch coverage of ChatGPT Images 2.0 called out text generation as the most visible jump over the prior version. In the outputs above, the GPT Image 2 rendering lays out a full brand pack: complete coffee-roaster mark, secondary label copy, sourcing disclaimers, the works. Nano Banana 2 nails the headline and the CTA button, but lands on a more stripped-down product composition.

Nano Banana 2 is still perfectly usable for short headlines, ticker-style motion lines, and single-word hero callouts — Google's own launch post highlights "precise instruction following" and improved text rendering over the Pro tier. But for anything with dense copy — a Black Friday flyer, a multi-line product claim, a pricing callout — GPT Image 2 needs fewer retries on our production briefs. If burned-in text is a requirement (and for most static social ads and display banners, it is), this round alone is often enough to justify GPT Image 2.

Round 2 — Brand and product fidelity

Winner: Nano Banana 2, by a clear margin.

GPT Image 2 — "AURELIA" matte-white serum bottle on marble, brief described by text only.

Nano Banana 2 — Same prompt, same parameters.

This is Nano Banana 2's home turf. Google's launch post specifically calls out "fidelity of up to 14 objects in a single workflow" and multi-reference image inputs as a core capability — and in practice, you can hand Nano Banana 2 your actual pack shot, brand color swatches, and lifestyle references, and the model anchors on them. That is a qualitatively different capability than text-only prompting for a specific branded product.

GPT Image 2 does accept reference images, but through a different endpoint — v1/images/edits, which takes an existing image plus an optional mask and an edit prompt. That is the right tool for inpainting and surgical changes, but less suited for "generate this exact bottle in eight new lifestyle backgrounds" where you want reference-conditioned generation, not a mask-based edit. For that job, Nano Banana 2's first-class reference-image input wins.

If brand fidelity is the blocker, Nano Banana 2 is the answer — ideally with your pack shot + hero lifestyle frame + brand color swatch as the reference inputs.

Round 3 — Character consistency across a campaign

Winner: Nano Banana 2, the only real answer.

GPT Image 2 — Editorial portrait at a café window.

Nano Banana 2 — Same brief, same aspect.

Ad campaigns live and die on recurring characters. The same model, the same creator persona, the same mascot — across Reels, Stories, square feed, and TikTok. Nano Banana 2's launch post states directly that it can "maintain character resemblance of up to five characters" across a workflow. In practice, a single-character campaign with a reference frame is extremely stable, and two-character storylines (founder + customer, influencer + product) hold up across many follow-up generations before drift appears.

GPT Image 2 handles scene composition well — as the single frames above show, both models produce credible editorial portraits — but OpenAI has not published an equivalent character-consistency spec, and the v1/images/edits workflow is oriented toward masked edits of a single input, not toward multi-reference persona locking. If you need "the same woman holding the same tote bag across four seasons," Nano Banana 2 is built for it; GPT Image 2 is not.

Round 4 — Editing and iteration

Winner: Nano Banana 2, on workflow ergonomics.

GPT Image 2 — A single prompt asking for the "IDENTICAL bottle" in two different scenes.

Nano Banana 2 — Same test, same brief.

Both models ship editing. The difference is the loop.

- GPT Image 2 exposes a distinct edit endpoint,

v1/images/edits. You submit an input image, an optional mask, and a prompt. It returns a new image. Great for inpainting, swapping elements in a defined region, or extending a canvas. But each round is a one-shot call. - Nano Banana 2 uses its main

generateContentendpoint as a multi-turn conversation. Generate a frame, then in the same session say "make the lighting warmer, swap the background to a neon alley, keep everything else identical" — and the model edits in place, retaining context.

For a performance team that treats each creative as a single frame that either ships or doesn't, this matters less. For a brand team that iterates three to five rounds per hero asset, conversational editing saves meaningful time.

Round 5 — Aspect ratios and platform placements

Winner: Nano Banana 2, on coverage.

GPT Image 2 — 3:2 is the closest native ratio to a 16:9 banner.

Nano Banana 2 — Native 16:9 rendering at the same brief.

Paid placements are an aspect-ratio menagerie: 1:1 (feed), 4:5 (Meta recommended feed), 9:16 (Stories, Reels, TikTok), 16:9 (YouTube, display), 21:9 (ultrawide desktop), 1:4 and 4:1 (skyscrapers and leaderboards). Google's Nano Banana 2 documentation lists 14 native aspect ratios including the wide ones (21:9), tall ones (1:8), and their inversions — which lets you generate a placement-native asset instead of cropping a 1:1 into a 9:16 and losing the subject.

GPT Image 2's public size set is three: 1024×1024, 1024×1536, and 1536×1024 — essentially 1:1, 2:3, and 3:2. That covers most feed and story placements after a crop, but for YouTube masthead (21:9), ultrawide display banners, or non-standard placements, Nano Banana 2's wider menu is meaningfully less work.

Round 6 — Directability and native reasoning

Winner: GPT Image 2, by a healthy margin — and this is the sleeper advantage.

GPT Image 2 — Hero bottle + seven numbered sample vials arranged as specified.

Nano Banana 2 — Same constraint-heavy brief, same aspect.

OpenAI's ChatGPT Images 2.0 launch post describes GPT Image 2 as their "first image model with native reasoning built into the architecture." Via ChatGPT and the API, GPT Image 2 exposes two modes: Instant mode (available to every user including free tier) and Thinking mode (the reasoning tier — accessible to Plus, Pro, Business, and Enterprise subscribers, and to all API developers). Thinking mode adds three production-relevant capabilities: web search during generation (so the model can ground on current product specs, competitor creative, or seasonal references), multi-image batching up to 8 in a single request, and output self-verification (the model checks its own render against the brief before returning).

In the outputs above, both models deliver photorealistic scenes. GPT Image 2 positioned the hero bottle in the foreground left and arranged the sample vials in a rough semi-circle behind it, closer to the literal brief. Nano Banana 2 split the vials into a left/right grouping around the hero, which is also a reasonable interpretation but less literal to the "behind in a semi-circle" constraint. For long constraint lists — the kind that show up in retainer briefs, compliance-sensitive ads, or carefully framed hero campaigns — GPT Image 2 in Thinking mode is more surgical.

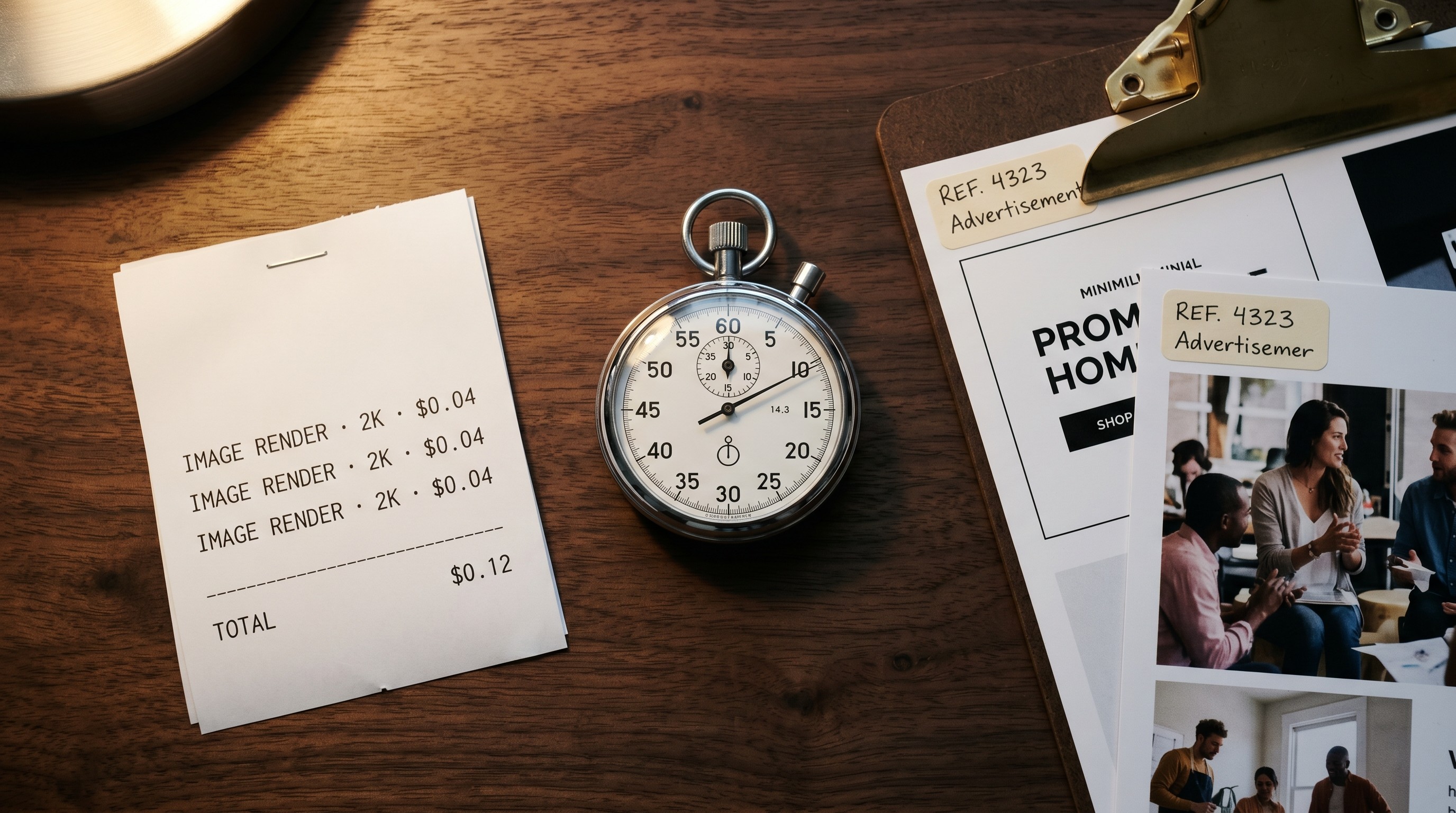

Round 7 — Cost and throughput

No clear winner — it depends on what you're rendering.

GPT Image 2 — Fine-grain small-numeric typography test.

Nano Banana 2 — Same brief.

Here are the current official per-image numbers, straight from OpenAI and Google's pricing pages (accurate at time of writing):

| Model | Size | Approximate cost per image |

|---|---|---|

| GPT Image 2 | 1024×1024 (low quality) | ~$0.006 |

| GPT Image 2 | 1024×1024 (medium quality) | ~$0.053 |

| GPT Image 2 | 1024×1024 (high quality) | ~$0.211 |

| Nano Banana 2 | 512px (0.5K) | $0.045 |

| Nano Banana 2 | 1024×1024 (1K, default) | $0.067 |

| Nano Banana 2 | 2K | $0.101 |

| Nano Banana 2 | 4K | $0.151 |

GPT Image 2 is token-billed — $8 per million image-input tokens and $30 per million image-output tokens — so the per-image numbers above are approximations for a 1024×1024 render at each quality tier. Nano Banana 2 is $60 per million output tokens, converted to the per-image rates Google publishes on its pricing page.

Two honest takeaways:

- At low-quality previews, GPT Image 2 is cheaper per image than Nano Banana 2. If your workflow is "generate a 1024×1024 thumbnail, iterate on composition, then upscale or regenerate at final quality," GPT Image 2 low-quality drafting is hard to beat on cost.

- At production quality, the two are in the same ballpark. A high-quality 1024×1024 GPT Image 2 render is approximately $0.21; a 2K Nano Banana 2 render is approximately $0.10, and a 4K is approximately $0.15. Nano Banana 2 wins on cost-per-pixel at native resolution; GPT Image 2 wins on cost-per-draft.

For high-volume iteration ("draft 100 variants, pick 10, render those at final quality"), a mixed pipeline — GPT Image 2 for cheap drafts + Nano Banana 2 for final high-res renders when you need 21:9 or 4K — is usually the cheapest path.

Which model, which job

After the rounds above, the decision matrix writes itself:

| If your blocker is... | Use this model |

|---|---|

| Burned-in headlines, price stickers, promo badges, dense copy | GPT Image 2 |

| Your actual product, logo, or pack shot appearing in scene | Nano Banana 2 |

| Same character / creator / mascot across a campaign | Nano Banana 2 |

| Round 2+ edits with conversational context | Nano Banana 2 |

| Inpainting or masked edits on a single canvas | GPT Image 2 (v1/images/edits) |

| Complex composite scenes with many specific constraints | GPT Image 2 (Thinking mode) |

| Non-standard aspect ratios (21:9, 1:4, 4:1, 8:1) | Nano Banana 2 |

| Web-grounded generation for current events / trends | GPT Image 2 (Thinking mode with web search) |

| Cheap low-quality drafting at volume | GPT Image 2 (low quality) |

| Native 4K production renders | Nano Banana 2 |

| Single hero brand image for a landing page or launch | Either — test both |

| 100-variant modular batch with headline overlays | GPT Image 2 for the layer with text, Nano Banana 2 for the product layer, composite in post |

The hybrid workflow we actually ship

The team-sized answer is not "pick one." It is a two-stage pipeline that uses each model for what it does best. This maps directly onto the modular Hook/Body/CTA framework from our 100 ad variants in one hour playbook.

Stage 1 — Product and character layer (Nano Banana 2).

Feed your pack shot, your brand colors, and your lifestyle reference as inputs. Generate clean, consistent product-in-scene or character-in-scene frames at whatever aspect ratios your placements need — and go direct to 2K or 4K when the placement calls for it. Lock the subject; the background variants are cheap. Save a library of 10–20 scene frames per campaign.

Stage 2 — Typography and overlay layer (GPT Image 2).

For anything with burned-in text — promotional banners, price callouts, "limited time" badges, holiday seasonal overlays — generate those as transparent-friendly layers or composite them into Nano Banana scenes. GPT Image 2's typography reliability saves a designer's afternoon per batch. When the brief is long and constraint-heavy, use Thinking mode.

Stage 3 — QA and fatigue monitoring.

This is where performance data closes the loop. Track hook score, click score, and conversion score by variant. Retire fatigued creative fast — TikTok at 7 days, Meta at ~14 — and feed winners back into Stage 1 and Stage 2 prompts. See our AI ad creative ROI measurement guide for the full metric stack.

For the strategic layer — which angle to test, which creative to retire, which audience to push budget into — pair the model output with a cross-channel intelligence layer like Soku AI that watches performance across Meta, Google, and TikTok and tells your team where the next variant should be pointed before you ever open the generator.

A note on reproducibility and provenance

One quiet consequence of using both models is that your creative archive needs to track which model made which asset. Keep the model ID (gpt-image-2 or gemini-3.1-flash-image-preview), the snapshot version (e.g. gpt-image-2-2026-04-21), the prompt, the reference images, and the seed (where available) in the filename or metadata. When a creative outperforms, you want to be able to reproduce it — or reproduce its recipe — six months later.

Both models embed provenance metadata: GPT Image 2 output carries C2PA content credentials; Nano Banana 2 output carries SynthID plus C2PA. That is a gift to any team that needs to audit where a shipped creative came from, and it is worth preserving through your compositing and upload pipeline instead of stripping it out.

Bottom line

GPT Image 2 is the best model available today for typography, long-constraint briefs, and cheap low-quality drafting. Nano Banana 2 is the best model available today for brand fidelity, character consistency, native 4K, non-standard aspect ratios, and iterative editing. Ad creative at scale needs both.

The teams pulling ahead right now are not the ones that picked the "right" model — they are the ones that stopped treating this as a pick and started treating it as a pipeline. Use each tool for what it is great at, let performance data steer the next variant, and keep shipping.

Want more on the strategic layer? Our 100 ad variants in one hour guide shows the modular framework that turns either model into a volume engine, and the best AI tools for Meta ad creatives roundup covers the generation, analytics, and automation stack around them.